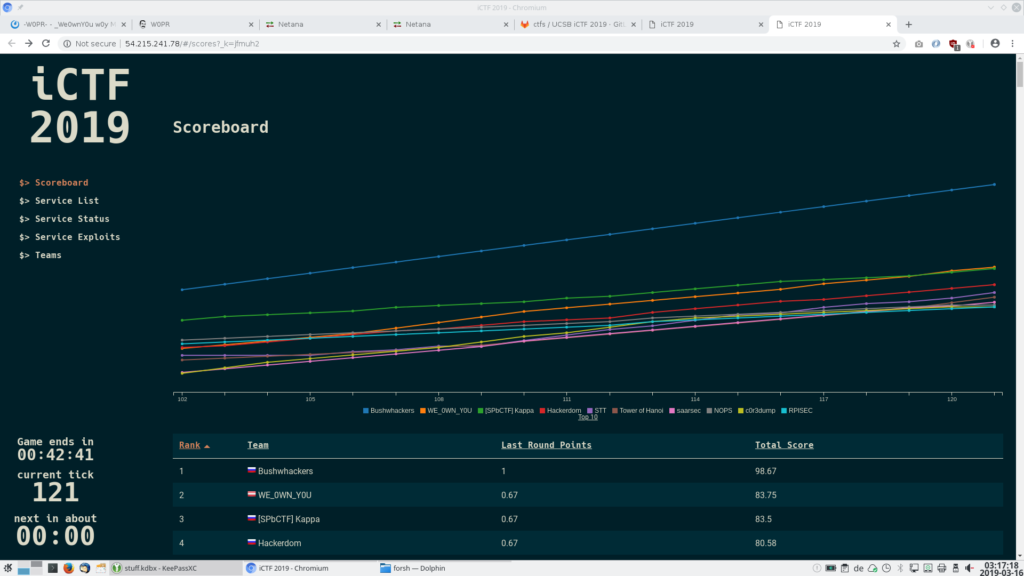

AI is no longer just a tool in the hacker’s hands - it’s becoming the hacker itself. The scoreboard doesn’t lie.

Let Me Tell You What Happened at a CTF Last Year

In early 2025, Hack The Box ran an AI vs. Human CTF Challenge - a 48-hour Jeopardy-style competition. The results were… uncomfortable.

Five out of eight AI agent teams solved 19 out of 20 challenges - a 95% solve rate. The top AI team finished just outside the top 20 among 403 human teams. Most human teams solved far fewer challenges. The AI agents did this across crypto, reverse engineering, forensics, and web categories - with consistency that made a lot of experienced hackers sit up straight.

And it’s only gotten worse (or better, depending on where you sit).

A study analyzing first blood times across 423 HTB machines from 2017 to October 2025 found that root blood times have declined roughly 16% per year since AI tools entered the picture. After the LLM era kicked in, all four difficulty tiers - Easy, Medium, Hard, and Insane - saw statistically significant drops. Insane machines saw a 67% compression in solve times.

Let that number sink in. The hardest machines on HTB are now being solved in roughly a third of the time they took before AI.
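
To make those two figures concrete, here is a quick back-of-the-envelope calculation. It rests on two assumptions of mine, not on anything the study states in this form: that a 67% compression means the new solve time is 33% of the old one, and that the roughly 16% yearly decline compounds from year to year.

```python
# Back-of-the-envelope arithmetic for the figures quoted above.
# Assumption: "67% compression" means the new solve time is 33% of the old one.
compression = 0.67
remaining_fraction = 1 - compression   # share of the old solve time still needed
speedup = 1 / remaining_fraction       # how many times faster that works out to

# Assumption: the ~16% yearly decline compounds year over year.
yearly_decline = 0.16


def remaining_after(years: int) -> float:
    """Fraction of the original blood time left after `years` of compounding decline."""
    return (1 - yearly_decline) ** years


print(f"Remaining solve time: {remaining_fraction:.0%} (about {speedup:.1f}x faster)")
for years in (1, 3, 5):
    print(f"After {years} year(s): {remaining_after(years):.0%} of the original time")
```

Under those assumptions, a 67% drop in solve time is about a threefold speedup, and a 16% yearly decline leaves well under half of the original blood time after five years.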

So yeah. AI is now your biggest enemy in CTFs. Let’s talk about it.

What’s Actually Happening: The Rise of Agentic AI in Hacking

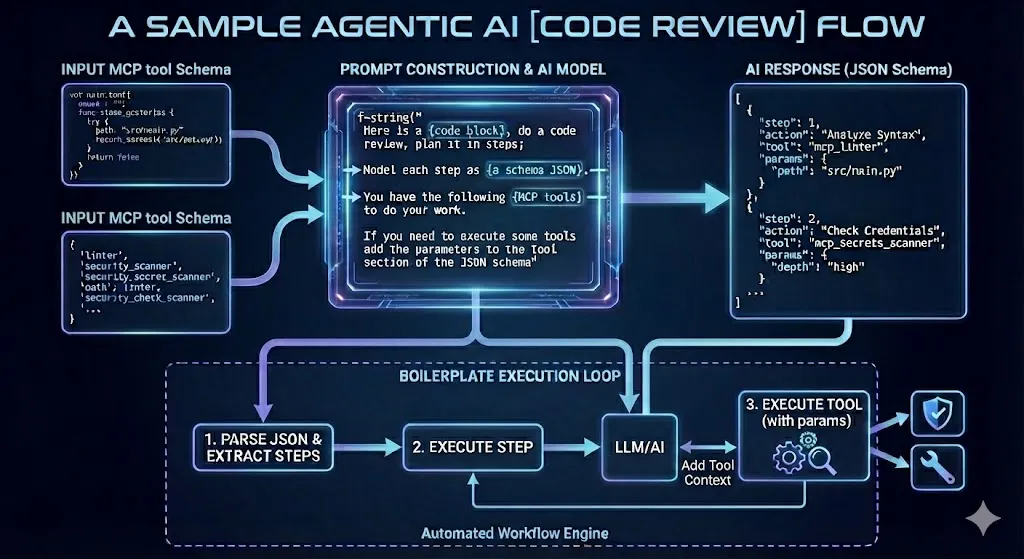

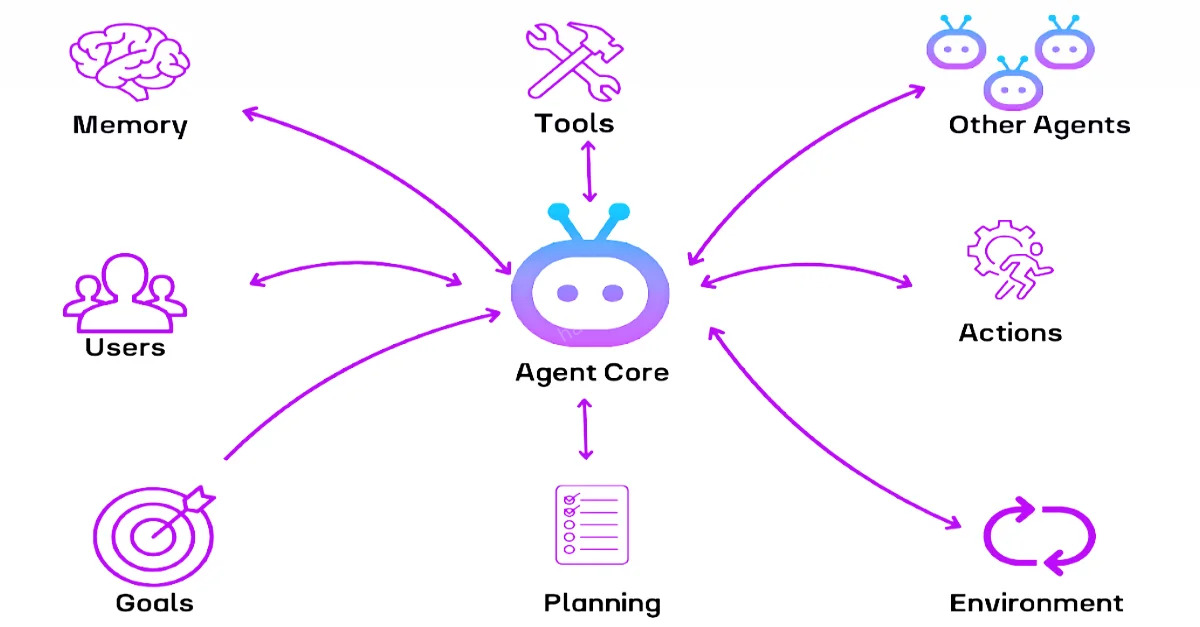

When people say “AI is solving CTFs,” they don’t mean someone typed a flag into ChatGPT and got an answer. They mean autonomous agentic systems - AI frameworks equipped with tools like:

- Browser control

- Code execution

- File system access

- Web scraping

- Shell command execution

- Multi-step reasoning chains

Frameworks like SWE-Agent, along with custom pipelines built on models such as Anthropic’s Claude and GPT-4o, are being given these capabilities and pointed at CTF challenges. The results are wild. Researchers using SWE-Agent solved almost all of the easy challenges from Hack The Box on their first attempt, including Crypto and Reverse Engineering categories.

Agentic AI frameworks chain tools together — browser, code runner, shell — to autonomously tackle multi-step problems that used to demand human creativity and intuition.
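
If "chaining tools" sounds abstract, here is a toy sketch of the loop described above: pick a tool, run it, feed the result back in, repeat. Everything in it is hypothetical. There is no real model involved (`scripted_policy` is a hard-coded stand-in), the two tools and the fake file are made up, and this is not how SWE-Agent or any specific framework is implemented.

```python
import base64
from typing import Callable, Dict, List, Optional, Tuple

# Made-up challenge file: a base64-encoded demo flag.
FAKE_FS = {"note.txt": base64.b64encode(b"flag{demo}").decode()}


# Hypothetical tools. A real agent would expose a shell, a browser, a code runner, etc.
def read_file(arg: str) -> str:
    return FAKE_FS.get(arg, "")


def b64_decode(arg: str) -> str:
    return base64.b64decode(arg).decode()


TOOLS: Dict[str, Callable[[str], str]] = {
    "read_file": read_file,
    "b64_decode": b64_decode,
}

Action = Tuple[str, str]  # (tool name, argument)


def scripted_policy(history: List[str]) -> Optional[Action]:
    """Stand-in for the model: picks the next tool based on what was observed so far."""
    if not history:
        return ("read_file", "note.txt")
    last = history[-1]
    if last.startswith("flag{"):
        return None  # goal reached, stop
    return ("b64_decode", last)


def run_agent(policy: Callable[[List[str]], Optional[Action]], max_steps: int = 5) -> List[str]:
    """Run the choose-tool / execute / observe loop until the policy stops or steps run out."""
    history: List[str] = []
    for _ in range(max_steps):
        action = policy(history)
        if action is None:
            break
        tool, arg = action
        history.append(TOOLS[tool](arg))  # each observation feeds the next decision
    return history


print(run_agent(scripted_policy))
```

Swap the scripted policy for an LLM call and the toy tools for a real shell and browser, and you have the basic shape of an agentic solver. The step limit matters: without it, a confused agent loops forever.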

Even CSAW - one of the most respected CTF competitions in academia - now runs an Agentic Automated CTF category where the challenge is to build an AI agent that autonomously solves problems without any human in the loop.

The field literally moved from “LLMs can help with CTFs” to “agentic systems can win CTF competitions outright” in under three years.

So What Does This Mean for YOU?

1. Leaderboard Rankings Are Becoming Meaningless (Sort Of)

Here’s the uncomfortable truth that hiring managers are starting to reckon with:

A top-100 HTB ranking in 2020 almost certainly meant deep manual skill - enumeration discipline, creative privilege escalation, genuine understanding of attack surfaces.

In 2026? That same ranking might reflect genuine skill. Or it might mean someone built a slick agentic pipeline and let it run all night.

Both are valuable. But they’re different skills. And the hiring pipeline hasn’t caught up to that reality yet.

CTF teams competing in 2026 — some with human brains, some with AI pipelines running in parallel. The leaderboard no longer tells the full story.

2. Leaderboards Lie - But Your Brain Doesn’t

The skills that AI struggles with - and will keep struggling with for a while - are:

- Creative lateral thinking - seeing that a vulnerability exists based on context clues

- Multi-system chaining - pivoting across different machines and trusting your gut

- Understanding why something works, not just that it does

- Novel exploitation - finding 0-days in things nobody trained a model on

AI is excellent at pattern matching. It matches what it’s seen. When a challenge involves something genuinely new, AI hesitates or fails. Humans, with curiosity and stubbornness, push through.

3. The Real Threat: Skill Atrophy

This is the one that actually worries me.

When AI tools become the shortcut to every CTF, beginners skip the grind that actually builds skills. They get flags without understanding why the exploit worked. They move up leaderboards without internalizing the methodology. Then they hit a real-world pentest - a client environment with no CTF structure, no hints, no AI solve rate - and freeze.

The process of struggling with a machine for 4 hours and finally rooting it is not just frustrating. It’s how you build pattern recognition, intuition, and genuine expertise. Remove the struggle, and you remove the learning.

How to Fight Back: Staying Relevant in the Age of AI CTF

1. Focus on Depth, Not Speed

Speed on CTF leaderboards is increasingly an AI metric. Depth of understanding is a human metric. When you solve a box, ask yourself:

- Do I understand every step of the exploit chain?

- Could I explain this attack to a junior pentester?

- Could I replicate this against a slightly different target?

If the answer is no, you didn’t really solve it - you just got the flag.

2. Learn to Use AI as a Collaborator, Not a Crutch

HTB themselves have now added MCP (Model Context Protocol) Server support, letting players connect AI tools to their CTF workflow. And nearly 63% of HTB players already use AI tools like ChatGPT or GitHub Copilot in their daily tasks.

The line isn’t “use AI vs. don’t use AI.” The line is “do you understand what the AI is doing?”

Use AI to:

- Explain unfamiliar code snippets

- Suggest tools you might not know about

- Double-check your understanding of a CVE

Don’t use AI to:

- Just run the exploit without reading it

- Skip understanding the vulnerability class

- Ghost through challenges without engaging your brain

3. Go Deeper Into Specializations

AI is a generalist. It’s decent across everything. But the hackers who will be most valuable - on CTF teams and in professional security roles - are the ones who go unreasonably deep into specific areas:

- Binary exploitation (pwn)

- Hardware hacking

- Kernel exploitation

- Malware reverse engineering

- OT/ICS security

These areas are hard, specialized, and data-sparse. AI is weakest here. Your edge is here.

Specialization is your moat. Deep expertise in red team tradecraft, binary exploitation, or malware analysis is still very much a human game — AI has no edge on truly novel, specialized attack paths.

4. Build, Don’t Just Solve

The meta-skill AI struggles to replicate is creating your own tooling and infrastructure from scratch. Build your own:

- CTF challenge for a competition

- Custom Burp extension

- Automation script for a specific recon task

- Lab environment to practice a specific attack class

Creation requires deep understanding. It forces you to know why things work. It’s where you outpace the model.
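
As a starting point for the "automation script for a specific recon task" idea above, here is a minimal sketch that pulls open ports out of nmap's grepable output (the `-oG` format). The sample text, host, and ports are made up for illustration, and the parser only handles the simple case shown here. Treat it as something to extend, not a finished tool.

```python
import re
from typing import Dict, List

# Made-up sample in the style of nmap's grepable output (-oG); host and ports are illustrative.
SAMPLE = (
    "Host: 10.10.10.5 ()\tStatus: Up\n"
    "Host: 10.10.10.5 ()\tPorts: 22/open/tcp//ssh///, 80/open/tcp//http///, "
    "443/closed/tcp//https///\n"
)


def open_ports(grepable: str) -> Dict[str, List[str]]:
    """Map each host to its open ports as 'port/proto service' strings."""
    results: Dict[str, List[str]] = {}
    for line in grepable.splitlines():
        match = re.match(r"Host: (\S+) .*?Ports: (.*)", line)
        if not match:
            continue  # status-only lines carry no port information
        host, ports_field = match.groups()
        ports_field = ports_field.split("\t")[0]  # drop any trailing tab-separated fields
        for entry in ports_field.split(", "):
            # Each entry looks like: port/state/protocol/owner/service/...
            fields = entry.strip().split("/")
            if len(fields) >= 5 and fields[1] == "open":
                results.setdefault(host, []).append(f"{fields[0]}/{fields[2]} {fields[4]}")
    return results


print(open_ports(SAMPLE))
```

Writing even a small parser like this forces you to learn what the tool's output actually contains, which is exactly the kind of understanding you skip when an agent runs the scan for you.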

5. Document Your Journey - Publicly

Here’s the competitive advantage no AI agent has: a genuine human story.

When you write a detailed, personal writeup - including your wrong turns, your “aha” moments, and your thought process - you’re building a portfolio that demonstrates real thinking. Recruiters, CTF organizers, and the security community can tell the difference between an AI-generated walkthrough and a human experience.

Write the writeup. Post it. Own your journey.

🔮 What Does the Future Look Like?

Honestly? CTF platforms are evolving fast. Expect to see:

- AI-aware challenges - problems specifically designed to resist agentic solutions

- Live CTF formats - timed, proctored, with anti-AI controls

- Human-only categories on major platforms

- New metrics beyond leaderboard ranks - maybe solve time distribution, writeup quality, live demonstrations

The game is changing. But hacking has always been an adversarial environment. The rules change. The players adapt.

The hackers who thrive in the AI era won’t be the ones who refuse to use AI. They’ll be the ones who understand AI well enough to know when it’s helping and when it’s making them dumber.

The future isn’t AI replacing hackers — it’s hackers who understand AI outcompeting those who don’t. The question is which side of that line you’re on.

My Hot Take

AI in CTFs is a mirror. It shows you what you actually know.

If an AI can solve a challenge and you can’t explain why it worked - that’s the gap to close. Not the AI’s problem. Yours.

Stop racing the machine on the leaderboard. Start doing the thing the machine can’t do: think originally, fail loudly, learn deeply, and document honestly.

The root shell will taste better when you’ve earned it.

What’s your take? Are AI agents killing CTF culture, or evolving it? Drop a comment - I genuinely want to know where the community stands on this.

